In the age of AI, data privacy and security have become crucial concerns as technology increasingly relies on vast amounts of personal information. This article explores the complex relationship between data privacy and AI, highlighting the challenges of safeguarding sensitive data and proposing measures for its safe and responsible use.

By analyzing ethical considerations, compliance with data privacy regulations, and the role of AI in enhancing security, this article seeks to provide an analytical perspective on how these issues intertwine.

The Relationship Between Data Privacy and AI

The relationship between data privacy and AI is a complex and evolving topic that requires careful examination to understand the implications of using personal data in artificial intelligence systems.

Data privacy plays a crucial role in the development and implementation of AI, particularly in machine learning algorithms that heavily rely on large amounts of data for training.

However, concerns regarding data breaches and unauthorized access to personal information pose significant challenges to the advancement of AI technology.

Data breaches compromise individuals’ privacy and can also set back the development of AI systems. Stolen or tampered training data can skew models, and sensitive information that falls into the wrong hands can enable unethical practices.

Therefore, ensuring robust data privacy measures is essential for maintaining public trust and facilitating responsible AI development.

Challenges in Data Privacy and Security

One of the major obstacles facing the protection of sensitive information in the digital era is the constant threat posed by unauthorized access and exploitation. To address these challenges, organizations need to implement robust security measures and establish effective privacy policies. This requires a comprehensive understanding of potential vulnerabilities and proactive strategies to mitigate risks.

Some key challenges in data privacy and security include:

1) Data breaches: With the increasing volume and value of data being stored, processed, and transmitted digitally, data breaches have become a significant concern. Cybercriminals exploit vulnerabilities in systems to gain unauthorized access to sensitive information, leading to severe consequences such as identity theft, financial losses, or reputational damage.

2) Evolving cyber threats: As technology evolves, so do cyber threats. Hackers are constantly finding new ways to exploit weaknesses in systems and networks. Organizations must stay updated on emerging threats and continually enhance their security protocols to counteract these evolving risks.

3) Compliance with regulations: Privacy laws vary across jurisdictions, making it challenging for organizations operating globally to comply with multiple regulations simultaneously. Adhering to different legal frameworks while maintaining consistent data protection practices can be complex but essential for avoiding penalties and maintaining trust.

4) Lack of awareness among users: Despite efforts made by organizations to protect user data, individuals themselves often contribute unknowingly or negligently towards compromising their own privacy. Users may unintentionally disclose personal information through social media or fall victim to phishing attacks due to lack of awareness about cybersecurity best practices.

To overcome these challenges, organizations should prioritize investing in advanced technologies like encryption algorithms, multi-factor authentication systems, intrusion detection systems (IDS), and employee training programs on cybersecurity awareness. Additionally, they should regularly review their privacy policies and ensure transparent communication with users regarding how their personal information is collected, used, shared, and protected.
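As one illustration of the multi-factor authentication mentioned above, the following is a minimal sketch of time-based one-time passwords as specified in RFC 6238, built on the HOTP algorithm from RFC 4226. The function names (`hotp`, `totp`, `verify_totp`) and the one-step drift window are assumptions made for this example, and a production system would normally rely on a maintained library and secure secret storage rather than hand-written code.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the time-step counter."""
    now = time.time() if at is None else at
    counter = max(0, int(now // step))  # clamp so early timestamps stay valid
    return hotp(secret, counter, digits)


def verify_totp(secret: bytes, submitted: str, at=None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from adjacent time steps to tolerate small clock drift."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(secret, now + drift * step, step), submitted)
        for drift in range(-window, window + 1)
    )
```

The server and the user's authenticator app share `secret`; at login the server calls `verify_totp(secret, code_from_user)` in addition to checking the password. The constant-time comparison via `hmac.compare_digest` avoids leaking how many digits matched.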

By addressing these challenges comprehensively and proactively incorporating strong security measures into their operations, organizations can safeguard sensitive data from unauthorized access or exploitation.

Measures for Ensuring Safe and Responsible Use of Data

To ensure the responsible and secure use of information, organizations need to adopt measures that promote ethical and accountable handling of data. One such measure is data anonymization, which involves removing personally identifiable information from datasets to protect the privacy of individuals. Rigorous anonymization reduces the harm that unauthorized access or misuse of a dataset can cause, although removing direct identifiers alone does not always prevent re-identification when the remaining attributes can be combined.

Obtaining user consent also plays a crucial role. Organizations should prioritize transparency and explain clearly how user data will be used, giving individuals the opportunity to make informed decisions about sharing their information. This respects users’ privacy rights and fosters trust between organizations and their customers. By incorporating these measures into their practices, organizations can balance the use of valuable data for AI applications against the protection of individual privacy.

| Measure | Description |
|---|---|
| Data Anonymization | Technique used to remove personally identifiable information from datasets |
| User Consent | Obtaining explicit permission from users before collecting or using their personal data |
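The two measures in the table can be combined in a small data-preparation step. The sketch below is illustrative only: the field names (`name`, `email`, `phone`, `consent`) and the helper names are hypothetical, and the technique shown is keyed pseudonymization rather than full anonymization, since attributes that remain in a record (such as age) may still allow re-identification when combined.

```python
import hashlib
import hmac

# Hypothetical field names treated as direct identifiers in this example.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def pseudonymize(record: dict, key: bytes, id_field: str = "email") -> dict:
    """Drop direct identifiers and attach a keyed pseudonym in their place."""
    normalized = record[id_field].strip().lower().encode("utf-8")
    token = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    cleaned = {
        field: value
        for field, value in record.items()
        if field not in DIRECT_IDENTIFIERS and field != "consent"
    }
    cleaned["subject_id"] = token[:16]  # stable per person, unreadable without the key
    return cleaned


def prepare_dataset(records, key: bytes) -> list:
    """Keep only records with explicit consent, then pseudonymize them."""
    return [pseudonymize(r, key) for r in records if r.get("consent") is True]
```

Using an HMAC with a secret key, rather than a plain hash, means that someone who obtains the dataset cannot recompute the pseudonyms from a list of known email addresses unless they also hold the key.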

Ethical Considerations in AI and Data Privacy

Ethical considerations surrounding the use of artificial intelligence and safeguarding individuals’ personal information have become crucial in the digital era. As AI technology continues to advance, there is a growing concern about how data privacy is being protected and whether ethical standards are being upheld.

One of the key ethical considerations in this context is ensuring that individuals have control over their own data and understand how it will be used. This includes obtaining informed consent from individuals before collecting their data, as well as providing transparency about how the data will be processed and shared.

Additionally, it is important to address biases in AI algorithms that may perpetuate discrimination or unfair treatment of certain groups.

By weighing these considerations carefully, we can strive to create a society where AI technologies are used responsibly, respecting individual privacy and promoting fairness for all.
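One simple way to start looking for the kind of bias described in this section is to compare how often a system produces a favorable outcome for different groups. The sketch below computes per-group approval rates and the gap between the highest and lowest rate, a measure often called the demographic parity difference. The function names and the `(group, approved)` input format are assumptions for illustration, and a small gap on this one metric does not by itself show that a system is fair.

```python
from collections import defaultdict


def selection_rates(decisions) -> dict:
    """Map each group to its share of favorable decisions.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())
```

A gap near zero means the groups receive favorable outcomes at similar rates; a large gap is a signal to audit the model and its training data more closely.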

Compliance with Data Privacy Regulations

Complying with data privacy regulations is essential for organizations to ensure the protection of individuals’ personal information and maintain trust in the use of advanced technologies.

In the age of AI, where vast amounts of data are collected and analyzed, there are numerous compliance challenges that organizations must navigate.

One major challenge is understanding and keeping up with the constantly evolving landscape of data privacy regulations across different jurisdictions.

Organizations need to stay up to date on laws such as the General Data Protection Regulation (GDPR) in the European Union and the California Consumer Privacy Act (CCPA) in California.

Another challenge is implementing robust data protection measures to safeguard personal information from unauthorized access or breaches.

This involves adopting encryption techniques, secure storage systems, and regular audits to ensure compliance with regulatory requirements.

Additionally, organizations must establish effective enforcement mechanisms to monitor and enforce data privacy policies within their operations.

This may include appointing dedicated privacy officers, conducting internal audits, and implementing disciplinary actions for non-compliance.

By addressing these compliance challenges and establishing strong enforcement mechanisms, organizations can uphold individuals’ rights to privacy while leveraging AI technologies responsibly and ethically.

The Role of AI in Enhancing Data Privacy and Security

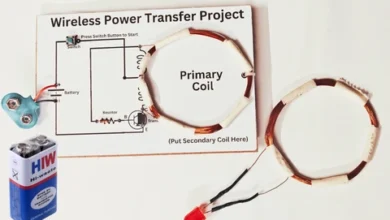

One significant aspect to consider when examining the impact of artificial intelligence on safeguarding individuals’ personal information is its ability to enhance data protection measures.

AI-assisted encryption management is a key tool in this regard. Traditional encryption deployments often require manual configuration and key management, which can be time-consuming and prone to human error.

With AI-based tooling, much of this work can be automated, allowing for more efficient and consistent protection of data. AI can analyze patterns and flag potential vulnerabilities, such as misconfigurations or unusual access, in near real time, enabling proactive measures to prevent unauthorized access or breaches.

Additionally, AI-based tools can adapt as new threats emerge, improving how protections are applied over time. The underlying encryption algorithms still depend on established, publicly reviewed cryptography rather than on AI itself. This enhanced level of data protection not only mitigates risks but also gives individuals more confidence that their personal information is secure.

The Future of Data Privacy and Security in the Age of AI

The future of data privacy and security in the age of AI will be shaped by several key factors.

First, there will be a continued evolution of privacy regulations to keep up with the rapid advancements in AI technology. These regulations will aim to ensure that personal data is protected and individuals have control over how their information is used.

Second, there will be advancements in AI-driven security solutions that can detect and mitigate potential threats more effectively. This includes using machine learning algorithms to analyze patterns and identify anomalies in real-time.

Lastly, building trust and transparency in AI systems will be crucial for ensuring that individuals feel comfortable sharing their data with these technologies. This can be achieved through clear communication about how data is collected, stored, and used, as well as implementing mechanisms for accountability and oversight.

Overall, the future of data privacy and security in the age of AI requires continuous adaptation and innovation to address emerging challenges while safeguarding individual rights and interests.

Continued Evolution of Privacy Regulations

The continued evolution of privacy regulations has become a critical part of the discussion surrounding data privacy and security in the age of AI. Because technology advances rapidly and data flows across borders, the impact of these regulations is global.

To address these challenges, governments and regulatory bodies have been working to establish comprehensive frameworks that protect individuals’ privacy rights while enabling the benefits of AI. The continued evolution of privacy regulations brings several key implications:

- Strengthened Data Protection: Privacy regulations are aimed at enhancing data protection measures, ensuring that personal information is handled securely and transparently. This includes imposing stricter requirements on organizations to obtain consent for data collection and processing activities.

- Increased Accountability: Privacy regulations emphasize accountability by holding organizations responsible for safeguarding personal information. This involves implementing robust security measures, conducting regular audits, and providing individuals with mechanisms to exercise their rights over their data.

- Enhanced Transparency: Privacy regulations promote transparency by requiring organizations to provide clear and concise information about their data practices. This enables individuals to make informed decisions about sharing their personal information.

- Harmonization on a Global Scale: With the global nature of data flows, privacy regulations aim to harmonize standards across different jurisdictions. Efforts such as the European Union’s General Data Protection Regulation (GDPR) have set a benchmark for other countries in developing their own frameworks.

- Adaptability to Technological Advancements: Privacy regulations need to be adaptable to keep pace with technological advancements such as AI. As AI continues to evolve, new challenges may arise that require adjustments in regulatory frameworks.

These evolving privacy regulations play a crucial role in shaping how data is collected, processed, and shared in the age of AI while balancing individual rights with societal benefits.

Advancements in AI-Driven Security Solutions

Advancements in AI-driven security solutions have changed the way data privacy and security are approached in the age of AI. With the increasing amount of data being generated and stored, traditional security methods may not be sufficient to protect sensitive information.

AI-driven monitoring systems have emerged as a powerful tool for detecting and preventing potential threats. These systems use advanced algorithms to analyze patterns, detect anomalies, and identify suspicious activities in real time. By continuously monitoring network traffic, user behavior, and system logs, they can quickly identify unauthorized access or malicious activity.

AI has also played a role in strengthening how encryption is deployed. Because it can process vast amounts of data quickly, AI can help identify weaknesses in encryption configurations and implementations so that more robust protections can be put in place. Moreover, with advancements in machine learning, AI is able to adapt and learn from new threats, supporting continuous improvement in data protection measures. The table below highlights the key benefits of advancements in AI-driven security solutions:

| Advancements in AI-Driven Security Solutions |
|---|
| Enhanced threat detection |
| Real-time monitoring |
| Improved anomaly detection |
| Continuous adaptation |
| Robust data encryption |
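To make the idea of anomaly detection concrete, here is a deliberately simple statistical sketch: it learns a baseline from past activity (for example, failed logins per hour) and flags new observations that deviate strongly from it. The function name, the example metric, and the threshold of three standard deviations are assumptions for illustration; real AI-driven monitoring typically uses richer features and learned models rather than a single z-score.

```python
import statistics


def find_anomalies(baseline, observed, threshold: float = 3.0) -> list:
    """Return (index, value) pairs in `observed` that deviate from the baseline.

    A value is flagged when it lies more than `threshold` sample standard
    deviations away from the baseline mean.
    """
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)  # requires at least two baseline points
    if stdev == 0:
        # A perfectly flat baseline: any different value is unusual.
        return [(i, x) for i, x in enumerate(observed) if x != mean]
    return [
        (i, x)
        for i, x in enumerate(observed)
        if abs(x - mean) / stdev > threshold
    ]
```

For instance, with a baseline of roughly five failed logins per hour, an hour with forty failures would be flagged for review while ordinary fluctuations would not.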

Building Trust and Transparency in AI Systems

To establish trust and transparency in AI systems, it is imperative to ensure a clear understanding of the decision-making processes employed by these systems. This can be achieved through various trust-building strategies and promoting transparency in algorithms.

Here are four key factors to consider:

- Explainability: AI systems should be able to provide explanations for their decisions in a clear and understandable manner. This includes providing insights into the underlying data, models, and inputs used by the system.

- Auditability: It is crucial to have mechanisms in place that allow for independent auditing of AI systems. This helps identify any biases or errors that may exist within the algorithms and ensures accountability.

- Data provenance: Transparency in data collection and usage is essential for building trust. Organizations should disclose how they collect, store, and use data, along with any potential risks associated with it.

- Ethical considerations: Trust can also be fostered by incorporating ethical principles into the design and implementation of AI systems. This involves ensuring fairness, privacy protection, and avoiding harm to individuals or groups.

By implementing these strategies, stakeholders can work towards establishing trust, addressing concerns related to bias or discrimination, and ultimately increasing acceptance of AI technologies among users who want to understand how decisions about them are made.

Frequently Asked Questions

What are the potential risks and vulnerabilities associated with the use of AI in handling personal data?

Data breaches and vulnerabilities are significant risks associated with the use of AI in handling personal data. AI algorithms, while powerful and efficient, can also be susceptible to manipulation or exploitation by malicious actors.

Data breaches occur when unauthorized individuals gain access to sensitive information, such as personal data, which can lead to identity theft, financial fraud, or other forms of harm. Additionally, AI algorithms may unintentionally perpetuate biases or discrimination if not properly designed or audited.

These vulnerabilities pose a threat to individual privacy and highlight the need for robust security measures and ethical considerations when implementing AI systems for handling personal data. As technology continues to advance, it is crucial to address these risks proactively in order to safeguard user information and ensure that the benefits of AI are maximized while minimizing potential harms.

How can organizations ensure transparency and accountability in their AI systems when it comes to data privacy and security?

Transparency challenges and accountability measures are crucial for organizations to ensure the integrity of their AI systems in relation to data privacy and security.

Transparency is essential as it promotes openness and allows individuals to understand how their personal data is being used. To address transparency challenges, organizations can adopt practices such as providing clear and accessible explanations of their AI algorithms, disclosing the sources of training data, and making information about data processing easily available.

Additionally, accountability measures play a key role in ensuring that organizations take responsibility for the actions of their AI systems. This can be achieved by implementing robust governance frameworks that include mechanisms for auditing and monitoring AI systems, establishing clear lines of responsibility within the organization, and conducting regular risk assessments to identify potential vulnerabilities.

By addressing transparency challenges and implementing strong accountability measures, organizations can build trust with users and stakeholders while safeguarding data privacy and security in the age of AI.

Are there any specific ethical guidelines or frameworks that organizations should follow when using AI to handle sensitive data?

When using AI to handle sensitive data, organizations should adhere to established ethical guidelines and frameworks to ensure both ethical handling and legal compliance.

These guidelines and frameworks serve as a roadmap for organizations, enabling them to navigate the complex landscape of AI ethics.

Ethical considerations are crucial in maintaining public trust and upholding societal values when handling sensitive data.

Organizations should prioritize transparency and accountability in their AI systems by providing clear explanations of how the data is collected, used, and protected.

Additionally, they should implement measures to minimize biases, discrimination, and unfair treatment that may arise from the use of AI algorithms.

Legal compliance is equally important to protect individuals’ privacy rights and prevent potential legal liabilities.

Organizations must comply with relevant laws, regulations, and industry standards pertaining to data protection, confidentiality, consent management, and security measures.

By following these ethical guidelines and frameworks rigorously, organizations can ensure that their use of AI in handling sensitive data respects individual rights while fostering innovation and progress in a responsible manner.

How can individuals protect their personal data from unauthorized access or misuse in the context of AI-driven technologies?

Data breach prevention and privacy-preserving technologies are crucial for protecting personal data from unauthorized access or misuse in the context of AI-driven technologies. To keep their data secure, individuals should adopt robust measures such as strong passwords, multi-factor authentication, and encryption.

Additionally, keeping software up to date and using firewall protection can help safeguard personal information. Privacy-preserving technologies also reduce exposure while still allowing data to be used for analysis and AI applications: differential privacy adds calibrated noise so that results reveal little about any single individual, and homomorphic encryption allows computation on data while it remains encrypted. These techniques are typically deployed by the organizations that process data, so individuals benefit most by choosing services that use them.

By implementing these preventive measures and leveraging privacy-preserving technologies, individuals can mitigate the risks associated with unauthorized access or misuse of their personal data in an AI-driven world.
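As a rough illustration of how differential privacy works, the sketch below applies the Laplace mechanism to a counting query: the true count is released with random noise whose scale is the query's sensitivity (1 for a count) divided by the privacy parameter epsilon. The function names are assumptions for this example, and Python's `random` module is used only for readability; it is not a suitable noise source for a real deployment.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng=None) -> float:
    """Release a count under epsilon-differential privacy (Laplace mechanism).

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

A smaller epsilon adds more noise and gives stronger privacy, while a larger epsilon gives more accurate answers with weaker protection. For example, `private_count(ages, lambda a: a >= 65, epsilon=0.5)` returns an approximate number of people aged 65 or over without revealing whether any one person is in the data.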

What are the potential limitations or challenges in implementing data privacy regulations in the age of AI, and how can these be overcome?

The challenges in implementing data privacy regulations in the age of AI are multifaceted. One major challenge is the sheer volume and complexity of data collected and generated by AI systems, which makes it difficult to regulate and protect personal information effectively.

Additionally, the lack of transparency and explainability in AI algorithms creates a barrier to understanding how personal data is being used and protected.

Furthermore, the potential for algorithmic bias poses a significant challenge to ensuring fair and equal treatment when handling personal information.

Overcoming these challenges requires a comprehensive approach that includes technological advancements such as developing robust encryption methods and anonymization techniques, as well as establishing clear guidelines for responsible AI development and usage.

Furthermore, fostering collaboration between industry stakeholders, policymakers, and privacy advocates can help navigate these complexities by promoting dialogue, sharing best practices, and developing effective regulatory frameworks that balance the protection of individual privacy rights with innovation in the age of AI.

Conclusion

In conclusion, the age of AI has brought about significant challenges and considerations in data privacy and security.

The relationship between data privacy and AI is complex, with AI technologies relying on vast amounts of personal data for their functioning. However, ensuring the safe and responsible use of data presents numerous challenges, such as protecting against unauthorized access, breaches, and misuse.

To address these challenges, various measures can be implemented. Organizations need to prioritize strong cybersecurity protocols and invest in robust encryption techniques to safeguard sensitive data. Additionally, implementing strict access controls and comprehensive training programs can help prevent internal breaches. Ethical considerations play a crucial role in balancing the benefits of AI with the protection of individual privacy rights. It is essential to establish transparent policies that govern data collection, usage, retention periods, and user consent.

Compliance with data privacy regulations is imperative to protect individuals’ rights while utilizing AI technologies. Organizations must adhere to legal frameworks such as the General Data Protection Regulation (GDPR) or the California Consumer Privacy Act (CCPA). These regulations require organizations to explain clearly how personal data will be used. The GDPR additionally requires a lawful basis for processing, of which consent is one, while the CCPA gives consumers the right to opt out of the sale of their personal information.

AI itself can also contribute positively by enhancing data privacy and security through automated threat detection systems, anomaly detection algorithms, and predictive analytics for identifying potential risks proactively. As advancements continue in AI technology, it is crucial to strike a balance between innovation and protecting individuals’ privacy.

Looking ahead, the future of data privacy in the age of AI requires ongoing vigilance from policymakers and organizations alike. Internationally coordinated efforts to regulate AI applications become increasingly important as this technology continues to evolve rapidly. By addressing these concerns head-on through collaboration among academia, industry experts, policymakers, ethicists, legal professionals, and advocacy groups, we can work towards a future where individuals’ privacy remains protected without hindering technological advancement.